Summary: A new study suggests why the brain excels at recognizing objects in both color and black-and-white images. Researchers found that early exposure to limited color information forces the brain to rely on luminance for object recognition.

This early adaptation allows the brain to later incorporate color information without losing the ability to recognize images in grayscale. The findings offer insights into visual development and could explain challenges faced by children who regain sight after congenital cataracts.

Key Facts:

- Early Adaptation: Limited color information in newborns helps the brain develop the ability to recognize objects based on luminance.

- Dual Proficiency: The brain’s early adaptation to luminance allows for later incorporation of color information without losing grayscale recognition abilities.

- Implications for Sight Restoration: Children who regain sight after congenital cataracts may struggle with black-and-white object recognition due to an overreliance on color cues.

Source: MIT

Even though the human visual system has sophisticated machinery for processing color, the brain has no problem recognizing objects in black-and-white images. A new study from MIT offers a possible explanation for how the brain comes to be so adept at identifying both color and color-degraded images.

Using experimental data and computational modeling, the researchers found evidence suggesting the roots of this ability may lie in development.

Early in life, when newborns receive severely limited color information, the brain is forced to learn to distinguish objects based on their luminance (the intensity of light they reflect or emit) rather than their color.

Later in life, when the retina and cortex are better equipped to process colors, the brain incorporates color information as well but also maintains its previously acquired ability to recognize images without critical reliance on color cues.
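A black-and-white image keeps only this brightness signal and discards hue. The following is a minimal sketch, assuming 8-bit RGB pixels and the ITU-R BT.601 luma weights, a common convention for grayscale conversion. The article does not say which conversion the study used. Strictly, this weighted sum gives luma, an approximation to luminance computed on gamma-encoded values. The helper names are hypothetical.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # (r, g, b), each assumed to be in 0..255


def luma_bt601(r: float, g: float, b: float) -> float:
    # ITU-R BT.601 weights (they sum to 1.0): green contributes most to
    # perceived brightness, blue the least. Assumed here for illustration only.
    return 0.299 * r + 0.587 * g + 0.114 * b


def to_grayscale(image: List[List[Pixel]]) -> List[List[float]]:
    # Replace every (r, g, b) pixel with a single brightness value,
    # discarding hue and saturation.
    return [[luma_bt601(*pixel) for pixel in row] for row in image]


# Pure red and a dark green look very different in color,
# yet both map to a gray level of roughly 76 on a 0-255 scale.
red_gray = luma_bt601(255, 0, 0)
green_gray = luma_bt601(0, 130, 0)
```

The example shows why a viewer restricted to grayscale input cannot lean on hue. Two surfaces that differ only in color can become indistinguishable, so recognition has to rest on luminance contrasts such as edges and shading.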

The findings are consistent with previous work showing that initially degraded visual and auditory input can actually be beneficial to the early development of perceptual systems.

“This general idea, that there is something important about the initial limitations that we have in our perceptual system, transcends color vision and visual acuity.

“Some of the work that our lab has done in the context of audition also suggests that there’s something important about placing limits on the richness of information that the neonatal system is initially exposed to,” says Pawan Sinha, a professor of brain and cognitive sciences at MIT and the senior author of the study.

The findings also help to explain why children who are born blind but have their vision restored later in life, through the removal of congenital cataracts, have much more difficulty identifying objects presented in black and white.

Those children, who receive rich color input as soon as their sight is restored, may develop an overreliance on color that makes them much less resilient when color information is altered or removed.

MIT postdocs Marin Vogelsang and Lukas Vogelsang, and Project Prakash research scientist Priti Gupta, are the lead authors of the study, which appears today in Science. Sidney Diamond, a retired neurologist who is now an MIT research affiliate, and additional members of the Project Prakash team are also authors of the paper.

Seeing in black and white

The researchers’ exploration of how early experience with color affects later object recognition grew out of a simple observation from a study of children who had their sight restored after being born with congenital cataracts. In 2005, Sinha launched Project Prakash (the Sanskrit word for “light”), an effort in India to identify and treat children with reversible forms of vision loss.

Many of those children are blind due to dense bilateral cataracts. This condition often goes untreated in India, which has the world’s largest population of blind children, estimated at between 200,000 and 700,000.

Children who receive treatment through Project Prakash may also participate in studies of their visual development, many of which have helped scientists learn more about how the brain’s organization changes following restoration of sight, how the brain estimates brightness, and other phenomena related to vision.

In this study, Sinha and his colleagues gave children a simple test of object recognition, presenting both color and black-and-white images.

For children born with normal sight, converting color images to grayscale had no effect at all on their ability to recognize the depicted object.

However, when children who underwent cataract removal were presented with black-and-white images, their performance dropped significantly.

This led the researchers to hypothesize that the nature of visual inputs children are exposed to early in life may play a crucial role in shaping resilience to color changes and the ability to identify objects presented in black-and-white images.

In normally sighted newborns, retinal cone cells are not well-developed at birth, resulting in babies having poor visual acuity and poor color vision. Over the first years of life, their vision improves markedly as the cone system develops.

Because the immature visual system receives significantly reduced color information, the researchers hypothesized that during this time, the baby brain is forced to gain proficiency at recognizing images with reduced color cues.

They also proposed that children who are born with cataracts and have them removed later may learn to rely too much on color cues when identifying objects. As the researchers demonstrated experimentally in the paper, these children have mature retinas and therefore begin their post-operative visual experience with good color vision.

To rigorously test that hypothesis, the researchers used a standard convolutional neural network, AlexNet, as a computational model of vision. They trained the network to recognize objects, giving it different types of input during training.

As part of one training regimen, they initially showed the model grayscale images only, then introduced color images later on. This roughly mimics the developmental progression of chromatic enrichment as babies’ eyesight matures over the first years of life.

Another training regimen comprised only color images. This approximates the experience of the Project Prakash children, because they can process full color information as soon as their cataracts are removed.

The researchers found that the developmentally inspired model could accurately recognize objects in either type of image and was also resilient to other color manipulations.

However, the Prakash-proxy model trained only on color images did not show good generalization to grayscale or hue-manipulated images.

“What happens is that this Prakash-like model is very good with colored images, but it’s very poor with anything else.

“When not starting out with initially color-degraded training, these models just don’t generalize, perhaps because of their over-reliance on specific color cues,” Lukas Vogelsang says.

The robust generalization of the developmentally inspired model is not merely a consequence of it having been trained on both color and grayscale images; the temporal ordering of these images makes a big difference.

Another object-recognition model that was trained on color images first, followed by grayscale images, did not do as well at identifying black-and-white objects.

“It’s not just the steps of the developmental choreography that are important, but also the order in which they are played out,” Sinha says.
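The three regimens described in this section use the same two kinds of input and differ mainly in order: grayscale then color, color only, and color then grayscale. The following is a minimal sketch of such schedules. It assumes a single hard switch at a fixed epoch. The article does not give the actual epochs, proportions, or whether color was introduced gradually or mixed with grayscale, so the regimen labels and the `switch_epoch` parameter are hypothetical. The network training itself is left out.

```python
from typing import List

# Hypothetical labels for the three orderings described in the article.
REGIMENS = ("gray_then_color", "color_only", "color_then_gray")


def input_mode(regimen: str, epoch: int, switch_epoch: int) -> str:
    """Return the input type ("gray" or "color") used at a given training epoch.

    switch_epoch is an illustrative assumption: the epoch at which a two-phase
    regimen moves from its first input type to its second.
    """
    if regimen not in REGIMENS:
        raise ValueError(f"unknown regimen: {regimen!r}")
    if regimen == "color_only":
        # Proxy for the late-sighted (Prakash-like) condition: color from the start.
        return "color"
    if regimen == "gray_then_color":
        # Developmentally inspired: color-degraded input first, color later.
        return "gray" if epoch < switch_epoch else "color"
    # color_then_gray: the same two input types in the reversed order.
    return "color" if epoch < switch_epoch else "gray"


def schedule(regimen: str, num_epochs: int, switch_epoch: int) -> List[str]:
    """List the input type used at every epoch of a training run."""
    return [input_mode(regimen, epoch, switch_epoch) for epoch in range(num_epochs)]


print(schedule("gray_then_color", 6, 3))
# ['gray', 'gray', 'gray', 'color', 'color', 'color']
```

In a full experiment, the returned mode would decide whether each training batch passes through a grayscale conversion before reaching the network (AlexNet, in the study). That wiring is an assumption here. So is the choice of a hard switch rather than a gradual increase in color, which would be an equally plausible reading of "chromatic enrichment."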

The advantages of limited sensory input

By analyzing the internal organization of the models, the researchers found that those that begin with grayscale inputs learn to rely on luminance to identify objects. Once they begin receiving color input, they don’t change their approach very much, since they’ve already learned a strategy that works well.

Models that began with color images did shift their approach once grayscale images were introduced, but could not shift enough to make them as accurate as the models that were given grayscale images first.

A similar phenomenon may occur in the human brain, which has more plasticity early in life and can readily learn to identify objects based on their luminance alone. Early in life, the paucity of color information may in fact benefit the developing brain, as it learns to identify objects from sparse information.

“As a newborn, the normally sighted child is deprived, in a certain sense, of color vision. And that turns out to be an advantage,” Diamond says.

Researchers in Sinha’s lab have observed that limitations in early sensory input can also benefit other aspects of vision, as well as the auditory system.

In 2022, they used computational models to show that early exposure to only low-frequency sounds, similar to those that babies hear in the womb, improves performance on auditory tasks that require analyzing sounds over a longer period of time, such as recognizing emotions.

They now plan to explore whether this phenomenon extends to other aspects of development, such as language acquisition.

Funding: The research was funded by the National Eye Institute of NIH and the Intelligence Advanced Research Projects Activity.

About this visual neuroscience research news

Author: Melanie Grados

Contact: Melanie Grados – MIT

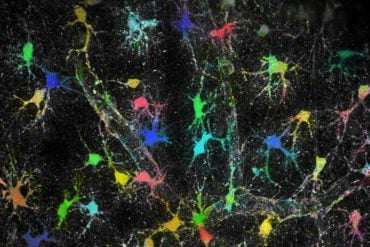

Image: The image is credited to Neuroscience News

Original Research: Closed access.

“Impact of early visual experience on later usage of color cues” by Marin Vogelsang et al. Science

Abstract

Impact of early visual experience on later usage of color cues

Human visual recognition is remarkably robust to chromatic changes. In this work, we provide a potential account of the roots of this resilience based on observations with 10 congenitally blind children who gained sight late in life.

Several months or years following their sight-restoring surgeries, the removal of color cues markedly reduced their recognition performance, whereas age-matched normally sighted children showed no such decrement.

This finding may be explained by the greater-than-neonatal maturity of the late-sighted children’s color system at sight onset, inducing overly strong reliance on chromatic cues. Simulations with deep neural networks corroborate this hypothesis.

These findings highlight the adaptive significance of typical developmental trajectories and provide guidelines for enhancing machine vision systems.