Summary: A new computational model considers the function of the entire brain, rather than specific networks or areas, to explain the relationship between mental processing and brain anatomy. The model aims to show how the brain works and how it breaks down as a result of aging and dementia.

Source: Mayo Clinic

Mayo Clinic researchers have proposed a new model for mapping the symptoms of Alzheimer’s disease to brain anatomy. The model was developed by applying machine learning to patient brain imaging data. It considers the function of the entire brain, rather than specific brain regions or networks, to explain the relationship between brain anatomy and mental processing.

The findings are reported in Nature Communications.

“This new model can advance our understanding of how the brain works and breaks down during aging and Alzheimer’s disease, providing new ways to monitor, prevent and treat disorders of the mind,” says David T. Jones, M.D., a Mayo Clinic neurologist and lead author of the study.

Alzheimer’s disease typically has been described as a protein-processing problem. The toxic proteins amyloid and tau deposit in areas of the brain, causing neuron failure that results in clinical symptoms such as memory loss, difficulty communicating and confusion.

However, the relationship between clinical symptoms, patterns of brain damage and brain anatomy is not clear. People also can have more than one neurodegenerative disease, making diagnosis difficult. Mapping brain behavior with this computational model may give clinicians a new perspective.

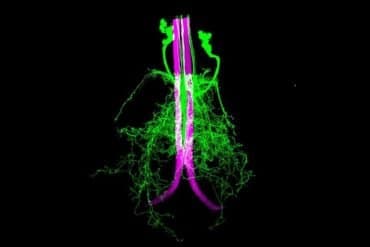

The new model was developed using brain glucose measurements from fluorodeoxyglucose positron emission tomography (FDG-PET) performed on 423 cognitively impaired participants in the Mayo Clinic Study of Aging and the Mayo Clinic Alzheimer’s Disease Research Center. FDG-PET is an imaging test that shows how glucose is fueling parts of the brain. Neurodegenerative diseases such as Alzheimer’s disease, Lewy body dementia and frontotemporal dementia have different patterns of glucose use.

The model compresses complex brain anatomy relevant for dementia symptoms into a conceptual, color-coded framework that shows areas of the brain associated with neurodegenerative disorders and mental functions. Imaging patterns shown in the model relate to the symptoms patients experience.

The model’s ability to predict changes associated with Alzheimer’s physiology was validated in 410 people. Additional validation was obtained by projecting a large amount of data from normal aging and from dementia syndromes that target memory, executive functions, language, behavior, movement, perception, semantic knowledge and visuospatial abilities.

The researchers found that 51% of the variance in glucose use patterns across the brains of patients with dementia could be explained by only 10 patterns. Each patient has a unique combination of these 10 brain glucose patterns that relates to the type of symptoms they experience. In follow-up work, Mayo Clinic’s Department of Neurology Artificial Intelligence (AI) Program, which is directed by Dr. Jones, is using these 10 patterns to work on AI systems that help interpret brain scans from patients who are being evaluated for Alzheimer’s disease and related syndromes.
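
For readers curious how a small number of patterns can account for a large share of the variation across many scans, the idea can be illustrated with a generic dimensionality reduction technique. The sketch below is not the researchers’ method or data: it assumes principal component analysis (computed with NumPy’s singular value decomposition) and uses randomly generated numbers in place of FDG-PET measurements. The region count, noise level and all variable names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in for imaging data: rows are participants, columns are
# brain regions. These values are invented and are NOT the study's data.
n_participants, n_regions, n_latent = 423, 200, 10
latent = rng.normal(size=(n_participants, n_latent))
loadings = rng.normal(size=(n_latent, n_regions))
noise = rng.normal(scale=3.0, size=(n_participants, n_regions))
scans = latent @ loadings + noise

# Principal component analysis via SVD of the mean-centered data matrix.
centered = scans - scans.mean(axis=0)
_, singular_values, components = np.linalg.svd(centered, full_matrices=False)

# Fraction of the total variance captured by each component, largest first.
explained = singular_values**2 / np.sum(singular_values**2)
top10_share = explained[:10].sum()

# Each participant's own combination of the first 10 patterns:
# one row per participant, one weight per pattern.
weights = centered @ components[:10].T

print(f"Share of variance explained by 10 patterns: {top10_share:.1%}")
print("Weights for the first participant:", np.round(weights[0], 2))
```

In this toy setup, each row of `components` plays the role of one brain-wide pattern, and each row of `weights` is one person’s mix of those patterns. The printed share depends entirely on the made-up noise level and should not be compared with the 51% reported in the study.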

“This new computational model, with more validation and support, has the potential to redirect scientific efforts to focus on dynamics in complex systems biology in the study of the mind and dementia rather than primarily focusing on misfolded proteins,” Dr. Jones says.

“If the mental functions relevant for Alzheimer’s disease are performed in a distributed manner across the entire brain, a new disease model like what we are proposing is needed. We think this model can potentially impact diagnostics, treatments and the fundamental understanding of neurodegeneration and mental functions in general.”

Co-authors — all of Mayo Clinic — are Val Lowe, M.D.; Jonathan Graff-Radford, M.D.; Hugo Botha, M.B., Ch.B.; Leland Barnard, Ph.D.; Daniela Wiepert; Matthew Murphy, Ph.D.; Melissa Murray, Ph.D.; Matthew Senjem; Jeffrey Gunter, Ph.D.; Heather Wiste; Bradley Boeve, M.D.; David Knopman, M.D.; Ronald Petersen, M.D., Ph.D.; and Clifford Jack Jr., M.D.

Funding: This research was supported by National Institutes of Health grants P30 AG62677, R01 AG011378, R01 AG041851 843, P50 AG016574, U01 AG06786, the Elsie and Marvin Dekelboum Family Foundation, the Liston Family Foundation, GHR Foundation, Alzheimer’s Association, Foundation Dr. Corinne Schuler, Race Against Dementia and Mayo Foundation for Medical Education and Research.

Dr. Jones is an inventor on a patent application describing machine learning techniques for automated clinical readings of medical images. Other authors declared no competing interests.

About this dementia and computational neuroscience research news

Author: Sue Graves-Johnson

Contact: Sue Graves-Johnson – Mayo Clinic

Image: The image is in the public domain

Original Research: Open access.

“A computational model of neurodegeneration in Alzheimer’s disease” by David T. Jones et al. Nature Communications

Abstract

Disruption of mental functions in Alzheimer’s disease (AD) and related disorders is accompanied by selective degeneration of brain regions. These regions comprise large-scale ensembles of cells organized into systems for mental functioning, however the relationship between clinical symptoms of dementia, patterns of neurodegeneration, and functional systems is not clear.

Here we present a model of the association between dementia symptoms and degenerative brain anatomy using F18-fluorodeoxyglucose PET and dimensionality reduction techniques in two cohorts of patients with AD.

This reflected a simple information processing-based functional description of macroscale brain anatomy which we link to AD physiology, functional networks, and mental abilities. We further apply the model to normal aging and seven degenerative diseases of mental functions.

We propose a global information processing model for mental functions that links neuroanatomy, cognitive neuroscience and clinical neurology.