Summary: Researchers uncovered that our brain relies more on internal predictions than sensory input when observing others’ actions.

This groundbreaking study challenges the classical view that visual perception primarily drives understanding of others’ actions. By analyzing brain activity in epilepsy patients during action observation, researchers found that the brain prefers predictions from our motor system over direct visual cues, especially in predictable sequences.

This insight reveals a predictive nature of the brain, shaping our perception from within.

Key Facts:

- The study demonstrates that the brain relies more on internal predictions than direct visual input when observing actions in a predictable sequence.

- Researchers used intracranial EEG in epilepsy patients to achieve high precision in measuring brain activity.

- Findings show a shift in information flow from premotor to visual regions in the brain, indicating a predictive rather than reactive perception process.

Source: KNAW

When we engage in social interactions, like shaking hands or having a conversation, our observation of other people’s actions is crucial. But what exactly happens in our brain during this process: how do the different brain regions talk to each other?

Researchers at the Netherlands Institute for Neuroscience provide an intriguing answer: our perception of what others do depends more on what we expect to happen than previously believed.

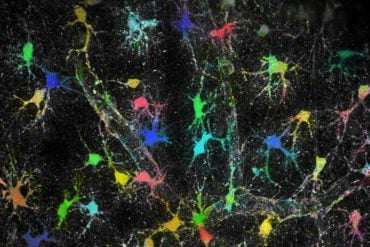

For some time, researchers have been trying to understand how our brains process other people’s actions. It is known, for example, that watching someone perform an action activates brain areas similar to those active when we perform that action ourselves.

People assumed these brain regions become activated in a particular order: seeing what others do first activates visual brain regions, then later, parietal and premotor regions we normally use to perform similar actions.

Scientists thought that this flow of information, from our eyes to our own actions, is what makes us understand what others do. This belief is based on measurements of brain activity in humans and monkeys while they watched simple actions, such as picking up a knife, presented in isolation in the lab.

In reality, actions don’t usually happen in isolation, out of the blue: they follow a predictable sequence with an end-goal in mind, like making breakfast. How does our brain deal with this?

Chaoyi Qin, Frederic Michon and their colleagues, led by Christian Keysers and Valeria Gazzola, provide us with an intriguing answer: if we observe actions in such meaningful sequences, our brains increasingly ignore what comes into our eyes and depend more on predictions of what should happen next, derived from our own motor system.

“What we would do next becomes what our brain sees”, summarizes Christian Keysers, a senior author of the study and director of the social brain lab at the institute.

To arrive at that counterintuitive conclusion, the team, in collaboration with the Jichi Medical University in Japan, had the unique opportunity to measure brain activity directly from the brains of epilepsy patients who participated in intracranial EEG research for medical purposes.

Such an examination involves measuring the brain’s electrical activity using electrodes that are not on the skull, but under it.

Unique opportunity

The advantage of this technique is that it is the only one that allows direct measurement of the electrical activity the brain uses to work. Clinically, it is used as a final step for medication-resistant epilepsy patients, as it can determine the exact source of epilepsy.

But while the medical team waits for epileptic seizures to occur, these patients have to stay in their hospital beds with nothing to do but wait. Researchers used this period as an opportunity to peek into the workings of the brain with unprecedented temporal and spatial accuracy.

During the experiment, participants performed a simple task: they watched a video in which someone performed various daily actions, such as preparing breakfast or folding a shirt. During that time, their electrical brain activity was measured through electrodes implanted across the brain regions involved in action observation, to examine how these regions talk to each other.

Two different conditions were tested, resulting in differing brain activity while watching. In one, the video was shown – as we would normally see the action unfold every morning – in its natural sequence: you see someone pick a bread-roll, then a knife, then cut open the roll, then scoop some butter etc.; in the other, these individual acts were re-shuffled into a random order.

People saw the exact same actions in the two conditions, but only in the natural order could their brains use their knowledge of how they would butter a bread-roll to predict which action comes next.
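The two viewing conditions can be illustrated with a minimal sketch. It is not the researchers' stimulus code: the act labels, the function name `make_conditions` and the fixed seed are assumptions made for illustration. It only shows the design idea that both conditions contain the same acts and differ in order alone.

```python
import random

# Hypothetical act labels, loosely following the breakfast example in the text.
NATURAL_SEQUENCE = [
    "pick up bread roll",
    "pick up knife",
    "cut open roll",
    "scoop butter",
    "spread butter",  # assumed final step, not named in the article
]


def make_conditions(acts, seed=0):
    """Return (natural, shuffled) orderings that contain the same acts."""
    if len(set(acts)) < 2:
        raise ValueError("need at least two distinct acts to reshuffle")
    rng = random.Random(seed)  # fixed seed so the example is repeatable
    natural = list(acts)
    shuffled = list(acts)
    # Reshuffle until the order actually differs from the natural one.
    while shuffled == natural:
        rng.shuffle(shuffled)
    return natural, shuffled


natural, shuffled = make_conditions(NATURAL_SEQUENCE)
print("natural: ", natural)
print("shuffled:", shuffled)
```

Because the content of the two lists is identical, any difference in brain activity between the conditions can be attributed to the order, and hence the predictability, of the acts.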

Different flow of information

Using sophisticated analyses in collaboration with Pascal Fries of the Ernst Strüngmann Institute (ESI) in Germany, the team revealed that when participants viewed the reshuffled, unpredictable sequence, information in the brain indeed flowed from visual brain regions, thought to describe what the eye is seeing, to parietal and premotor regions, which also control our own actions, just as the classical model predicted. But when participants viewed the natural sequences, the activity changed dramatically.

“Now, information was actually flowing from the premotor regions, that know how we prepare breakfast ourselves, down to the parietal cortex, and suppressed activity in the visual cortex”, explains Valeria Gazzola.

“It is as if they stopped seeing with their eyes, and started to see what they would have done themselves”.

Their finding is part of a wider realization in the neuroscience community that our brain does not simply react to what comes in through our senses. Instead, we have a predictive brain that constantly predicts what comes next. The expected sensory input is then suppressed. We see the world from the inside out, rather than from the outside in.

Of course, if what we see violates our expectations, the expectation-driven suppression fails, and we become aware of what we actually see rather than what we expected to see.

About this neuroscience research news

Author: Eline Feenstra

Source: KNAW

Contact: Eline Feenstra – KNAW

Image: The image is credited to Neuroscience News

Original Research: Open access.

“Predictability alters information flow during action observation in human electrocorticographic activity” by Christian Keysers et al. Cell Reports

Abstract

Predictability alters information flow during action observation in human electrocorticographic activity

Highlights

- iEEG can track direction of information flow while viewing natural action sequences

- Embedding acts in predictable sequences increases premotor→parietal beta information flow

- The generated expectations suppress broadband gamma activity in occipital cortices

- This supports the notion of predictive coding in the action observation network

Summary

The action observation network (AON) has been extensively studied using short, isolated motor acts. How activity in the network is altered when these isolated acts are embedded in meaningful sequences of actions remains poorly understood.

Here we utilized intracranial electrocorticography to characterize how the exchange of information across key nodes of the AON—the precentral, supramarginal, and visual cortices—is affected by such embedding and the resulting predictability.

We found more top-down beta oscillation from precentral to supramarginal contacts during the observation of predictable actions in meaningful sequences compared to the same actions in randomized, and hence less predictable, order.

In addition, we find that expectations enabled by the embedding lead to a suppression of bottom-up visual responses in the high-gamma range in visual areas.

These results, in line with predictive coding, inform how nodes of the AON integrate information to process the actions of others.
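For readers unfamiliar with the terms "beta" and "high-gamma", the following minimal sketch shows how power in two frequency bands can be separated in a recorded trace. It is not the authors' analysis pipeline, and it measures band power only, not the direction of information flow. The band limits (13 to 30 Hz for beta, 70 to 150 Hz for high gamma), the sampling rate and the synthetic sine-wave traces are all assumptions chosen for illustration.

```python
import cmath
import math


def band_power(signal, fs, f_lo, f_hi):
    """Mean squared DFT magnitude over bins with f_lo <= frequency <= f_hi."""
    n = len(signal)
    powers = []
    for k in range(n // 2 + 1):
        freq = k * fs / n
        if f_lo <= freq <= f_hi:
            # Plain discrete Fourier transform coefficient for bin k.
            coeff = sum(
                x * cmath.exp(-2j * math.pi * k * t / n)
                for t, x in enumerate(signal)
            )
            powers.append(abs(coeff) ** 2 / n ** 2)
    if not powers:
        raise ValueError("no frequency bins fall inside the requested band")
    return sum(powers) / len(powers)


def synthetic_trace(fs, seconds, beta_amp, gamma_amp):
    """Toy trace: a 20 Hz (beta) sine plus a 100 Hz (high-gamma) sine."""
    n = int(fs * seconds)
    return [
        beta_amp * math.sin(2 * math.pi * 20 * t / fs)
        + gamma_amp * math.sin(2 * math.pi * 100 * t / fs)
        for t in range(n)
    ]


FS = 500  # assumed sampling rate in Hz
BETA = (13, 30)         # assumed beta band limits in Hz
HIGH_GAMMA = (70, 150)  # assumed high-gamma band limits in Hz

trace_a = synthetic_trace(FS, 1.0, beta_amp=1.0, gamma_amp=0.2)
trace_b = synthetic_trace(FS, 1.0, beta_amp=0.2, gamma_amp=1.0)

for name, trace in (("trace_a", trace_a), ("trace_b", trace_b)):
    beta = band_power(trace, FS, *BETA)
    gamma = band_power(trace, FS, *HIGH_GAMMA)
    print(f"{name}: beta={beta:.5f} high_gamma={gamma:.5f}")
```

Here `trace_a` is built with a strong beta component and `trace_b` with a strong high-gamma component, so the band-power comparison recovers the difference that was put in. Estimating which region drives which, as the study reports, requires directed connectivity methods beyond this sketch.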