Understanding speech is not just a matter of believing one’s ears.

Even if we just hear part of what someone has said, when we are familiar with the context, we automatically add the missing information ourselves. Researchers from the Max Planck Institute for Empirical Aesthetics in Frankfurt and the Max Planck Institute for Cognitive and Brain Sciences in Leipzig have now succeeded in demonstrating how we do this.

Incomplete utterances are something we constantly encounter in everyday communication. Individual sounds and occasionally entire words fall victim to fast speech rates or imprecise articulation. In poetic language, omissions are used as a stylistic device or are the necessary outcome of the use of regular metre or rhyming syllables. In both cases, our comprehension of the spoken content is only slightly impaired or, in most cases, not affected at all.

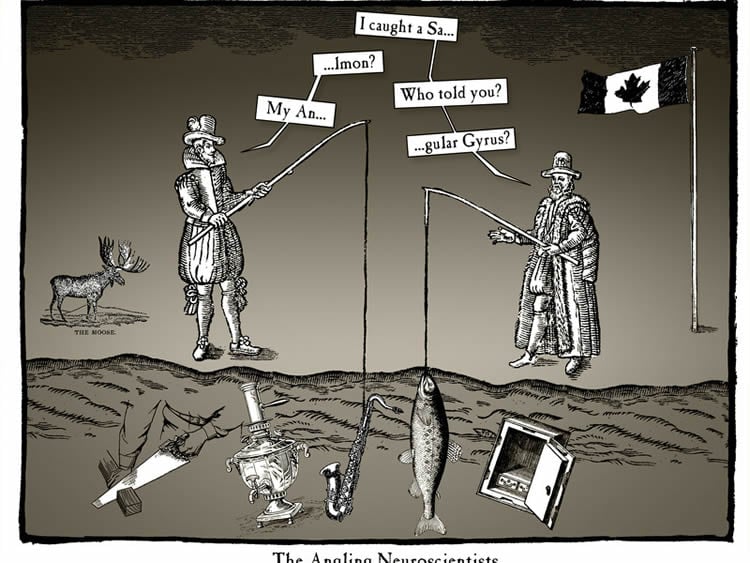

The results of previous linguistic research suggest that language is particularly resilient to omissions when the linguistic information can be predicted both in terms of its content and phonetics. The most probable ending to the sentence: “The fisherman was in Norway and caught a sal…” is the word “salmon”. Accordingly, due to its predictability, this sentence-ending word should be able to accommodate the omission of the “m” and “on” sounds.

Mathias Scharinger, a neurolinguist at the Max Planck Institute for Empirical Aesthetics in Frankfurt, was particularly interested in identifying the regions of the brain that facilitate the understanding of incomplete words in contexts that allow such predictions. Together with colleagues from the Max Planck Institute for Cognitive and Brain Sciences and the Universities of Chemnitz and Lübeck, Scharinger presented test subjects with sentences in which the last word could be predicted and which ended in a word fragment with the final consonants omitted (e.g. “sal”). The test subjects also heard complete sentences as well as sentences in which it was not possible to deduce the last word from the context, for example: “At that moment he gave no thought whatsoever to the salmon”. The sentences were played while the test subjects lay in an MRI scanner that recorded the neuronal activity in their brains.

The study’s findings show that one region of the brain, the left angular gyrus, responds in a special way to the presentation of incomplete predictable words. This structure in the parietal lobe of the human brain supports the interpretation of meaningful sentences and is considered an important sub-area of the neural language network. Its response showed an interaction between predictability and completeness: when words arose in contexts that facilitated prediction, the response to incomplete words did not differ from the response to complete words. However, when it was not possible to predict the last word in the sentence, the angular gyrus reacted more strongly to incomplete words than to complete ones, and thus presumably registered the omission of the consonants in the word “salmon”.

The researchers, headed by Mathias Scharinger, interpreted this interaction pattern as proof that, first, the understanding of incomplete language benefits from contexts that allow prediction and, second, this benefit is supported by the angular gyrus in particular. Thus, this region of the brain appears to enable the integration of prior knowledge with the heard sensory language signal and to make an essential contribution to successful hearing. Based on the findings of the study, interesting hypotheses can also be made about the neural processing of aesthetically motivated language omission. Future research at the Max Planck Institute for Empirical Aesthetics is intended to focus on this aspect.

Source: Dr. Mathias Scharinger – Max Planck Institute

Image Credit: The image is credited to MPI for Empirical Aesthetics

Original Research: Abstract for “Predictions interact with missing sensory evidence in semantic processing areas” by Scharinger, M., Bendixen, A., Herrmann, B., Henry, M.J., Mildner, T., and Obleser, J. in Human Brain Mapping. Published online November 19, 2015. doi:10.1002/hbm.23060

Abstract

Predictions interact with missing sensory evidence in semantic processing areas

Human brain function draws on predictive mechanisms that exploit higher-level context during lower-level perception. These mechanisms are particularly relevant for situations in which sensory information is compromised or incomplete, as for example in natural speech where speech segments may be omitted due to sluggish articulation. Here, we investigate which brain areas support the processing of incomplete words that were predictable from semantic context, compared with incomplete words that were unpredictable. During functional magnetic resonance imaging (fMRI), participants heard sentences that orthogonally varied in predictability (semantically predictable vs. unpredictable) and completeness (complete vs. incomplete, i.e. missing their final consonant cluster). The effects of predictability and completeness interacted in heteromodal semantic processing areas, including left angular gyrus and left precuneus, where activity did not differ between complete and incomplete words when they were predictable. The same regions showed stronger activity for incomplete than for complete words when they were unpredictable. The interaction pattern suggests that for highly predictable words, the speech signal does not need to be complete for neural processing in semantic processing areas.
