Summary: A new study finds that people struggle to distinguish real images from AI-generated ones: only 61% of participants identified them accurately, far below the 85% researchers anticipated.

The study involved 260 people evaluating 20 photos, half sourced from Google and half produced by AI programs like Stable Diffusion or DALL-E. Participants focused on details like fingers, eyes, and teeth to make their judgments. The difficulty they had highlights how quickly AI technology is evolving, outpacing academic research and legislation, and underscores the growing potential for misuse in disinformation campaigns.

The study emphasizes the need for robust tools to combat this emerging “AI arms race,” as AI-generated images become increasingly realistic and challenging to differentiate.

Key Facts:

- Lower-than-Expected Accuracy: Participants could only correctly identify real vs. AI-generated images 61% of the time, significantly below researchers’ expectations.

- Rapid Technological Advancements: The pace of AI development is outstripping the ability of legislation and academic research to keep up, leading to more realistic AI-generated images.

- Disinformation Threat: The study points to the potential use of AI-generated images for malicious purposes, especially in fabricating content about public figures, indicating a critical need for detection and countermeasures.

Source: University of Waterloo

If you recently had trouble figuring out if an image of a person is real or generated through artificial intelligence (AI), you’re not alone.

A new study from researchers at the University of Waterloo found that people had more difficulty than expected distinguishing real people from artificially generated ones.

In the Waterloo study, 260 participants were given 20 unlabeled pictures: 10 of real people, obtained from Google searches, and 10 generated by Stable Diffusion or DALL-E, two commonly used AI programs that generate images.

Participants were asked to label each image as real or AI-generated and explain why they made their decision. Only 61 per cent of participants could tell the difference between AI-generated people and real ones, far below the 85 per cent threshold that researchers expected.
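To make the scoring concrete, here is a minimal sketch of how one participant's labels could be checked against an answer key with 10 real and 10 AI-generated images, as in the study design. The function name and the sample answers are hypothetical illustrations, not data or code from the study.

```python
from typing import Sequence


def labeling_accuracy(guesses: Sequence[str], truth: Sequence[str]) -> float:
    """Return the share of images whose guessed label matches the true label."""
    if len(guesses) != len(truth):
        raise ValueError("guesses and truth must have the same length")
    if not truth:
        raise ValueError("need at least one image")
    correct = sum(g == t for g, t in zip(guesses, truth))
    return correct / len(truth)


# Answer key mirroring the study design: 10 real photos, then 10 AI-generated ones.
truth = ["real"] * 10 + ["ai"] * 10

# Hypothetical participant (not study data):
# 7 of the 10 real photos and 5 of the 10 AI images labeled correctly.
guesses = ["real"] * 7 + ["ai"] * 3 + ["ai"] * 5 + ["real"] * 5

score = labeling_accuracy(guesses, truth)
print(f"{score:.0%}")  # 12 of 20 correct
```

A participant guessing at random on a set like this would be expected to land near 50 per cent, which is the natural baseline for reading figures such as 61 and 85 per cent.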

“People are not as adept at making the distinction as they think they are,” said Andreea Pocol, a PhD candidate in Computer Science at the University of Waterloo and the study’s lead author.

Participants paid attention to details such as fingers, teeth, and eyes as possible indicators when looking for AI-generated content – but their assessments weren’t always correct.

Pocol noted that the nature of the study allowed participants to scrutinize photos at length, whereas most internet users look at images in passing.

“People who are just doomscrolling or don’t have time won’t pick up on these cues,” Pocol said.

Pocol added that the extremely rapid rate at which AI technology is developing makes it particularly difficult to understand the potential for malicious action posed by AI-generated images. Academic research and legislation often can't keep pace: AI-generated images have become even more realistic since the study began in late 2022.

These AI-generated images are particularly threatening as a political and cultural tool, since any user could create fake images of public figures in embarrassing or compromising situations.

“Disinformation isn’t new, but the tools of disinformation have been constantly shifting and evolving,” Pocol said.

“It may get to a point where people, no matter how trained they will be, will still struggle to differentiate real images from fakes. That’s why we need to develop tools to identify and counter this. It’s like a new AI arms race.”

About this AI art and neuroscience research news

Author: Ryon Jones

Contact: Ryon Jones – University of Waterloo

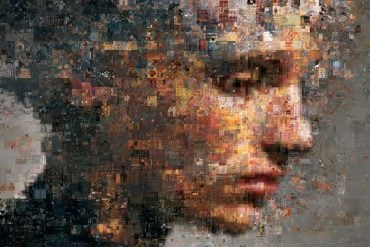

Image: The image is credited to Neuroscience News

Original Research: Open access.

“Seeing is No Longer Believing: A Survey on the State of Deepfakes, AI-Generated Humans, and Other Nonveridical Media” by Andreea Pocol et al. Advances in Computer Graphics

Abstract

Seeing is No Longer Believing: A Survey on the State of Deepfakes, AI-Generated Humans, and Other Nonveridical Media

Did you see that crazy photo of Chris Hemsworth wearing a gorgeous, blue ballgown? What about the leaked photo of Bernie Sanders dancing with Sarah Palin?

If these don’t sound familiar, it’s because these events never happened–but with text-to-image generators and deepfake AI technologies, it is effortless for anyone to produce such images.

Over the last decade, there has been an explosive rise in research papers, as well as tool development and usage, dedicated to deepfakes, text-to-image generation, and image synthesis.

These tools provide users with great creative power, but with that power comes “great responsibility;” it is just as easy to produce nefarious and misleading content as it is to produce comedic or artistic content.

Therefore, given the recent advances in the field, it is important to assess the impact they may have. In this paper, we conduct meta-research on deepfakes to visualize the evolution of these tools and paper publications. We also identify key authors, research institutions, and papers based on bibliometric data.

Finally, we conduct a survey that tests the ability of participants to distinguish photos of real people from fake, AI-generated images of people.

Based on our meta-research, survey, and background study, we conclude that humans are falling behind in the race to keep up with AI, and we must be conscious of the societal impact.