Summary: A new AI system is able to automatically assign a gender to a person based on the dynamics of their smile. Researchers report female smiles are more expansive than male smiles.

Source: University of Bradford.

The dynamics of how men and women smile differ measurably, according to new research, enabling artificial intelligence (AI) to automatically assign gender based purely on a smile.

Although automatic gender recognition is already available, existing methods use static images and compare fixed facial features. The new research, by the University of Bradford, is the first to use the dynamic movement of the smile to automatically distinguish between men and women.

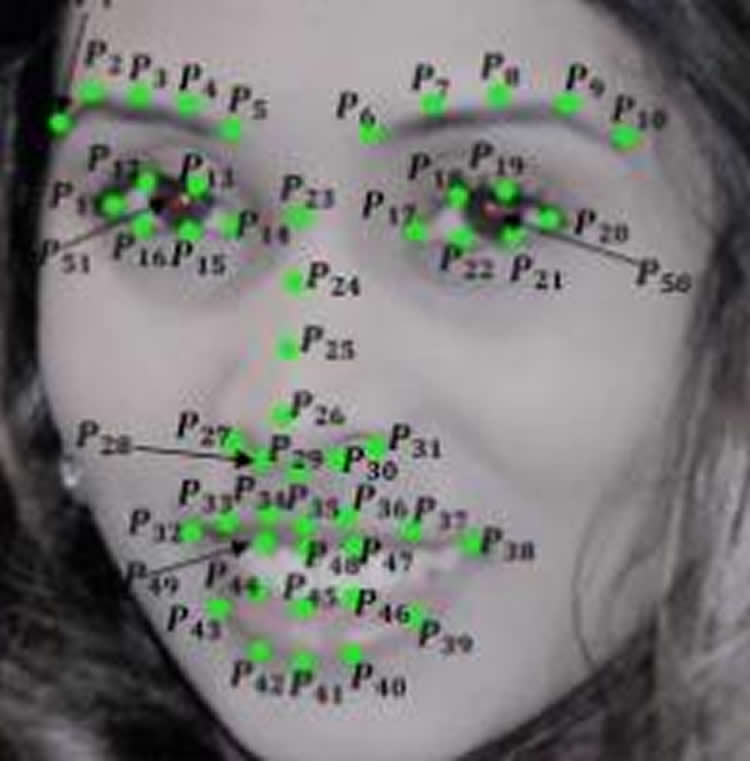

Led by Professor Hassan Ugail, the team mapped 49 landmarks on the face, mainly around the eyes, mouth and down the nose. They used these to assess how the face changes during a smile as a result of the underlying muscle movements, including both changes in the distances between the different points and the ‘flow’ of the smile: how much, how far and how fast the different points on the face moved as the smile was formed.
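
The two kinds of measurement described here, changes in distances between landmarks and the ‘flow’ of each landmark, can be sketched roughly as follows. This is a minimal illustration and not the researchers' code: it assumes each video frame has already been reduced to a list of (x, y) landmark coordinates, and the function names (`pairwise_distances`, `distance_changes`, `landmark_flow`), the frame rate and the three-landmark toy data are all hypothetical.

```python
import math
from itertools import combinations

# A frame is a list of (x, y) landmark positions; a smile is a list of frames.
# The study tracked 49 landmarks; the toy data below uses only 3 (an
# assumption made purely to keep the example short).

def pairwise_distances(frame):
    """Euclidean distance between every pair of landmarks in one frame."""
    return [math.dist(p, q) for p, q in combinations(frame, 2)]

def distance_changes(frames):
    """Change in each pairwise distance between the first and last frame."""
    start = pairwise_distances(frames[0])
    end = pairwise_distances(frames[-1])
    return [e - s for s, e in zip(start, end)]

def landmark_flow(frames, fps):
    """Per-landmark (path length, mean speed); needs at least two frames."""
    duration = (len(frames) - 1) / fps
    flow = []
    for i in range(len(frames[0])):
        # Sum the frame-to-frame displacement of landmark i.
        path = sum(math.dist(a[i], b[i]) for a, b in zip(frames, frames[1:]))
        flow.append((path, path / duration))
    return flow

# Toy smile: two mouth corners move apart while the nose tip stays still.
smile = [
    [(-2.0, 0.0), (2.0, 0.0), (0.0, 3.0)],
    [(-2.5, 0.0), (2.5, 0.0), (0.0, 3.0)],
    [(-3.0, 0.0), (3.0, 0.0), (0.0, 3.0)],
]

changes = distance_changes(smile)      # first entry: mouth-corner separation
flow = landmark_flow(smile, fps=2)     # hypothetical frame rate
print(changes)
print(flow)
```

In this toy case the first entry of `changes` captures how much the mouth widens, while `flow` records how far and how fast each point travelled, which loosely mirrors the "how much, how far and how fast" description above.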

They then tested whether there were noticeable differences between men and women – and found that there were, with women’s smiles being more expansive.

Lead researcher Professor Hassan Ugail, from the University of Bradford, said: “Anecdotally, women are thought to be more expressive in how they smile, and our research has borne this out. Women definitely have broader smiles, expanding their mouth and lip area far more than men.”

The team created an algorithm using their analysis and tested it against video footage of 109 people as they smiled. The computer was able to correctly determine gender in 86% of cases and the team believe the accuracy could easily be improved.

“We used a fairly simple machine classification for this research as we were just testing the concept, but more sophisticated AI would improve the recognition rates,” said Professor Ugail.

The underlying purpose of this research is more about trying to enhance machine learning capabilities, according to the team, but it has raised a number of intriguing questions that they hope to investigate in future projects.

One is how the machine might respond to the smile of a transgender person and the other is the impact of plastic surgery on recognition rates.

“Because this system measures the underlying muscle movement of the face during a smile, we believe these dynamics will remain the same even if external physical features change, following surgery for example,” said Professor Ugail. “This kind of facial recognition could become a next-generation biometric, as it’s not dependent on one feature, but on a dynamic that’s unique to an individual and would be very difficult to mimic or alter.”

Publisher: Organized by NeuroscienceNews.com.

Image Source: NeuroscienceNews.com image is credited to the researchers.

Original Research: Open access research in The Visual Computer: International Journal of Computer Graphics.

doi:10.1007/s00371-018-1494-x

MLA: University of Bradford. “Is Your Smile Male or Female?” NeuroscienceNews. NeuroscienceNews, 15 March 2018. <https://neurosciencenews.com/male-female-smile-8641/>.

APA: University of Bradford (2018, March 15). Is Your Smile Male or Female? NeuroscienceNews. Retrieved March 15, 2018 from https://neurosciencenews.com/male-female-smile-8641/

Chicago: University of Bradford. “Is Your Smile Male or Female?” https://neurosciencenews.com/male-female-smile-8641/ (accessed March 15, 2018).

Abstract

Is gender encoded in the smile? A computational framework for the analysis of the smile driven dynamic face for gender recognition

Automatic gender classification has become a topic of great interest to the visual computing research community in recent times. This is due to the fact that computer-based automatic gender recognition has multiple applications including, but not limited to, face perception, age, ethnicity, identity analysis, video surveillance and smart human computer interaction. In this paper, we discuss a machine learning approach for efficient identification of gender purely from the dynamics of a person’s smile. Thus, we show that the complex dynamics of a smile on someone’s face bear much relation to the person’s gender. To do this, we first formulate a computational framework that captures the dynamic characteristics of a smile. Our dynamic framework measures changes in the face during a smile using a set of spatial features on the overall face, the area of the mouth, the geometric flow around prominent parts of the face and a set of intrinsic features based on the dynamic geometry of the face. This enables us to extract 210 distinct dynamic smile parameters which form as the contributing features for machine learning. For machine classification, we have utilised both the Support Vector Machine and the k-Nearest Neighbour algorithms. To verify the accuracy of our approach, we have tested our algorithms on two databases, namely the CK+ and the MUG, consisting of a total of 109 subjects. As a result, using the k-NN algorithm, along with tenfold cross validation, for example, we achieve an accurate gender classification rate of over 85%. Hence, through the methodology we present here, we establish proof of the existence of strong indicators of gender dimorphism, purely in the dynamics of a person’s smile.
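
The evaluation the abstract mentions, k-Nearest Neighbour classification scored with tenfold cross validation, can be illustrated with a small self-contained sketch. It is not the paper's implementation: the data here is synthetic two-dimensional noise rather than the 210 smile parameters, the labels "A" and "B" are placeholders, and the choice of `k=3`, the interleaved fold split and the helper names `knn_predict` and `cross_val_accuracy` are assumptions made for illustration.

```python
import math
import random
from collections import Counter

def knn_predict(train, query, k=3):
    """Majority label among the k training samples nearest to `query`."""
    nearest = sorted(train, key=lambda s: math.dist(s[0], query))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]

def cross_val_accuracy(samples, folds=10, k=3, seed=0):
    """Fraction of samples classified correctly under k-fold cross validation."""
    data = samples[:]
    random.Random(seed).shuffle(data)
    correct = 0
    for f in range(folds):
        # Every `folds`-th sample is held out; the rest form the training set.
        test = data[f::folds]
        train = [s for i, s in enumerate(data) if i % folds != f]
        correct += sum(knn_predict(train, x, k) == y for x, y in test)
    return correct / len(data)

# Synthetic stand-in data: two well-separated clusters of 2-D feature vectors.
rng = random.Random(42)
samples = (
    [([rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)], "A") for _ in range(50)]
    + [([rng.gauss(3.0, 1.0), rng.gauss(3.0, 1.0)], "B") for _ in range(50)]
)

accuracy = cross_val_accuracy(samples)
print(f"10-fold accuracy: {accuracy:.2f}")
```

Each sample is scored by a model that never saw it during training, which is why a cross-validated figure such as the "over 85%" reported in the abstract is a fairer estimate than accuracy measured on the training data itself.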