Study shows how two brain systems in mammals cooperate to capture and process sound information.

When people hear the sound of footsteps or the drilling of a woodpecker, the rhythmic structure of the sounds is striking, says Michael Wehr, a professor of psychology at the University of Oregon.

Even when the temporal structure of a sound is less obvious, as with human speech, the timing still conveys a variety of important information, he says. When a sound is heard, neurons in the lower subcortical region of the brain fire in sync with the rhythmic structure of the sound, almost exactly encoding its original structure in the timing of spikes.

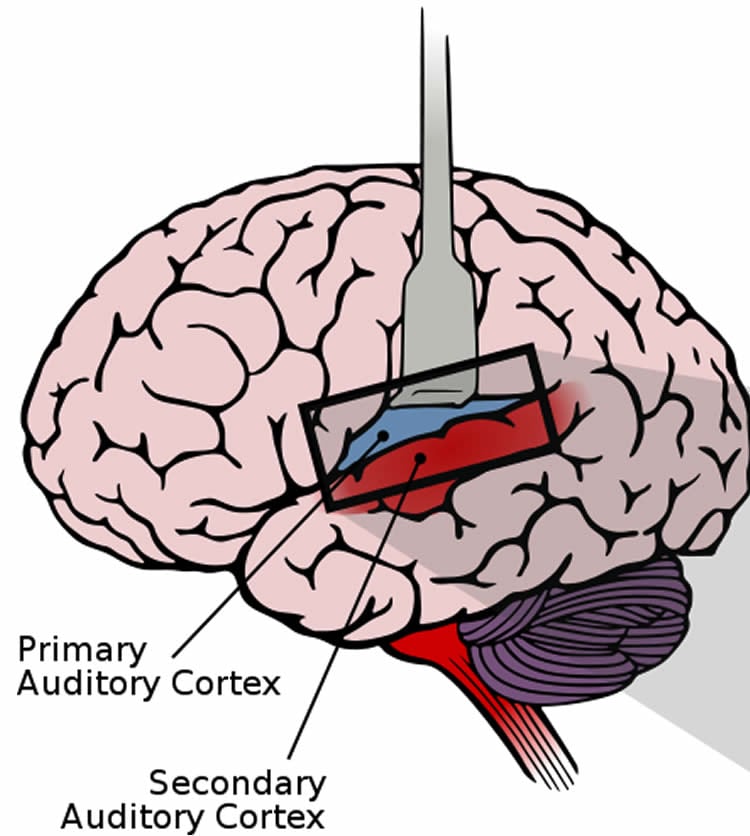

“As the information progresses towards the auditory cortex, however, the representation of sound undergoes a transformation,” said Wehr, a researcher in the UO’s Institute of Neuroscience. “There is a gradual shift towards neurons that use an entirely different system for encoding information.”

In a new study, detailed in the April 8 issue of the journal Neuron, Wehr’s team documented this transformation of information in the auditory system of rats. The findings are similar to those previously shown in primates, suggesting that the processes involved are at work in the auditory systems of all mammals, the team concluded.

Neurons in the brain use two different languages to encode information: temporal coding and rate coding.

For neurons in the auditory thalamus, the part of the brain that relays information from the ears to the auditory cortex, this takes the form of temporal coding. Neurons fire in sync with the original sound, providing an exact replication of the sound’s structure in time.

In the auditory cortex, however, about half the neurons use rate coding, which conveys the structure of the sound through the density and rate of the neurons' spiking rather than through its exact timing.
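To make the two "languages" concrete, here is a toy Python sketch (an illustration, not the study's model) that generates spike trains in response to a periodic click train. The function names, the 5 ms latency, the jitter and the Poisson rate-coding neuron are all assumptions chosen for clarity: the temporal-coding neuron fires once per click at a fixed delay, while the rate-coding neuron fires at random times and only its spike count tracks the click rate.

```python
import numpy as np


def click_times(click_rate_hz, duration_s=1.0):
    """Onset times (s) of a periodic click train."""
    n_clicks = int(round(click_rate_hz * duration_s))
    return np.arange(n_clicks) / click_rate_hz


def temporal_code_spikes(click_rate_hz, duration_s=1.0, latency_s=0.005,
                         jitter_s=0.0005, seed=0):
    """Hypothetical temporal-coding neuron: one spike per click at a fixed
    latency (plus small jitter), so the spike train copies the click timing."""
    rng = np.random.default_rng(seed)
    clicks = click_times(click_rate_hz, duration_s)
    return np.sort(clicks + latency_s + rng.normal(0.0, jitter_s, clicks.size))


def rate_code_spikes(click_rate_hz, duration_s=1.0, spikes_per_click=0.2, seed=0):
    """Hypothetical rate-coding neuron: Poisson spike count that grows with the
    click rate, with spike times drawn uniformly (unrelated to the clicks)."""
    rng = np.random.default_rng(seed)
    n_spikes = rng.poisson(spikes_per_click * click_rate_hz * duration_s)
    return np.sort(rng.uniform(0.0, duration_s, n_spikes))
```

Comparing `temporal_code_spikes(10)` with `click_times(10)` shows spikes sitting a few milliseconds after each click, whereas the spike times from `rate_code_spikes` bear no fixed relation to the clicks; only how many spikes occur changes with the click rate.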

But how does the transformation from one coding system to another take place?

To find the answer, Wehr and lead author Xiang Gao, then an undergraduate student and now a medical student at Oregon Health & Science University, used a technique known as whole-cell recording in rats to capture the thousands of interactions that take place within a single neuron each time it responds to a sound. The team observed how individual cells responded to a steady stream of rhythmic clicks.

During the study, Wehr and Gao noted that individual rate-coding neurons received up to 82 percent of their inputs from temporal-coding neurons.

“This means that these neurons are acting like translators, converting a sound from one language to another,” Wehr said. “By peering inside a neuron, we can see the mechanism for how the translation is taking place.”

One of these mechanisms is the way that so-called excitatory and inhibitory neurons cooperate to push and pull together, like parents pushing a playground teeter-totter. In response to each click, excitatory neurons first push on a cell and then inhibitory neurons follow with a pull exactly out of phase with the excitatory neurons. Together, the combination drives cells to fire spikes at a high rate, converting the temporal code into a rate code.
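The push-pull timing can be sketched with two conductance traces. In this toy Python example (an illustration, not the authors' model), every click triggers an exponentially decaying excitatory conductance, and an equal inhibitory conductance arrives half an inter-click interval later, so the two are exactly out of phase. The function names, the 2 ms time constant, the unit amplitudes and the reversal potentials (0 mV for excitation, -80 mV for inhibition, with the membrane held at -65 mV) are assumed values. The sketch only shows the alternating push and pull on the membrane; according to the study's abstract, the actual conversion to a rate code also involves voltage-dependent properties of the neuron, which are not modeled here.

```python
import numpy as np


def push_pull_conductances(click_rate_hz, duration_s=0.5, dt=1e-4, tau_s=0.002,
                           g_exc=1.0, g_inh=1.0):
    """Hypothetical push-pull input: each click evokes an excitatory conductance,
    and inhibition follows half an inter-click interval later (out of phase).
    Returns the time axis and the two conductances (arbitrary units)."""
    t = np.arange(int(round(duration_s / dt))) * dt
    period = 1.0 / click_rate_hz
    clicks = np.arange(int(round(click_rate_hz * duration_s))) * period
    g_e = np.zeros_like(t)
    g_i = np.zeros_like(t)
    for c in clicks:
        # "Push": excitation jumps up at the click, then decays exponentially.
        after_e = t >= c
        g_e[after_e] += g_exc * np.exp(-(t[after_e] - c) / tau_s)
        # "Pull": inhibition with the same shape, delayed by half a period.
        onset_i = c + period / 2.0
        after_i = t >= onset_i
        g_i[after_i] += g_inh * np.exp(-(t[after_i] - onset_i) / tau_s)
    return t, g_e, g_i


def net_current(g_e, g_i, v_mv=-65.0, e_exc_mv=0.0, e_inh_mv=-80.0):
    """Net synaptic current at a fixed membrane potential
    (positive = depolarizing, negative = hyperpolarizing)."""
    return g_e * (e_exc_mv - v_mv) + g_i * (e_inh_mv - v_mv)
```

At 10 clicks per second, `net_current` is positive (depolarizing) just after each click and negative (hyperpolarizing) about 50 ms later, when the delayed inhibition arrives.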

The observation provides a glimpse into how circuits deep within the brain shape the way the world is perceived, Wehr said. Neuroscientists have previously speculated that the transformation from temporal coding to rate coding may explain the perceptual boundary people experience between rhythm and pitch: slow trains of clicks sound rhythmic, but fast trains of clicks sound like a buzzy tone.

It could be that these two very different experiences of sound are produced by the two different kinds of neurons, Wehr said.

In the UO study, synchronized neurons, using temporal coding, tracked a click train up to about 20 clicks per second, at which point the non-synchronized, rate-coding neurons began to take over. These non-synchronized neurons could respond to faster rates, up to about 500 clicks per second, but with a rate code in which neurons fire in a random pattern that is not locked to the individual clicks.
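The article does not say how the team decided whether a neuron was "synchronized". A standard measure in auditory physiology is vector strength: each spike is treated as a unit vector at its phase within the click cycle, and the vectors are averaged, giving 1 for perfect locking and values near 0 for spikes spread evenly across the cycle. The Rayleigh statistic, 2·N·VS², then tests whether the phases are clustered. The Python sketch below assumes these measures and a cutoff of 13.8 (which corresponds to p < 0.001 under the Rayleigh test); the function names and the threshold are illustrative assumptions, not details taken from the UO study.

```python
import numpy as np


def vector_strength(spike_times, click_rate_hz):
    """Vector strength of spikes relative to a periodic click train
    (1 = perfect phase locking, 0 = phases spread evenly over the cycle)."""
    spikes = np.asarray(spike_times, dtype=float)
    if spikes.size == 0:
        return 0.0
    # Phase of each spike within the inter-click cycle, in radians.
    phases = 2.0 * np.pi * spikes * click_rate_hz
    return float(np.abs(np.mean(np.exp(1j * phases))))


def rayleigh_statistic(spike_times, click_rate_hz):
    """Rayleigh statistic 2 * N * VS^2; large values mean clustered phases."""
    n = len(spike_times)
    return 2.0 * n * vector_strength(spike_times, click_rate_hz) ** 2


def is_synchronized(spike_times, click_rate_hz, threshold=13.8):
    """Classify a response as stimulus-synchronized (assumed cutoff: 13.8)."""
    return rayleigh_statistic(spike_times, click_rate_hz) > threshold
```

Under these assumptions, spike times from a neuron that follows each click would give a vector strength near 1 and pass the test, while a rate-coding neuron firing at times unrelated to fast clicks would give a value near 0.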

Why would the auditory system switch representations? The answer, Wehr said, may lie in the visual cortex, which also uses rate coding.

“This transformation in the auditory system is similar to what has been observed in the visual system,” he said. “Except that in the auditory system, neurons are encoding information about time instead of about space.”

Neuroscientists believe rate codes could support multisensory integration in the higher cortical areas, Wehr said. A rate code, he said, could be a universal language, helping us put together what we see and hear.

Funding: The National Institutes of Health funded the study (1R01-DC-011379).

Source: Andrew Stiefel – University of Oregon

Image Credit: The image is adapted from an image credited to Chittka L, Brockmann/PLOS Biology. Licensed Creative Commons Attribution-Share Alike 2.5 Generic

Original Research: Abstract for "A Coding Transformation for Temporally Structured Sounds within Auditory Cortical Neurons" by Xiang Gao and Michael Wehr in Neuron. Published online March 26, 2015. doi:10.1016/j.neuron.2015.03.004

Abstract

A Coding Transformation for Temporally Structured Sounds within Auditory Cortical Neurons

Highlights

• In cortex, the coding strategy for sounds shifts from a temporal code to a rate code

• We compare the coding strategies of synaptic input and spiking output in awake rats

• Synaptic input can be temporal-coded even when outputs are rate-coded

• This thalamocortical coding transformation can therefore be observed within neurons

Summary

Although the coding transformation between visual thalamus and cortex has been known for over 50 years, whether a similar transformation occurs between auditory thalamus and cortex has remained elusive. Such a transformation may occur for time-varying sounds, such as music or speech. Most subcortical neurons explicitly encode the temporal structure of sounds with the temporal structure of their activity, but many auditory cortical neurons instead use a rate code. The mechanisms for this transformation from temporal code to rate code have remained unknown. Here we report that the membrane potential of rat auditory cortical neurons can show stimulus synchronization to rates up to 500 Hz, even when the spiking output does not. Synaptic inputs to rate-coding neurons arose in part from temporal-coding neurons but were transformed by voltage-dependent properties and push-pull excitatory-inhibitory interactions. This suggests that the transformation from temporal to rate code can be observed within individual cortical neurons.
