Summary: An AI-controlled robotic limb driven by animal-like tendons can be tripped and recover before its next footfall, even though it was never programmed to do so.

Source: University of Southern California

New AI algorithms could allow robots to learn to move by themselves, imitating animals.

For a newborn giraffe or wildebeest, being born can be a perilous introduction to the world–predators lie in wait for an opportunity to make a meal of the herd’s weakest member. This is why many species have evolved ways for their juveniles to find their footing within minutes of birth.

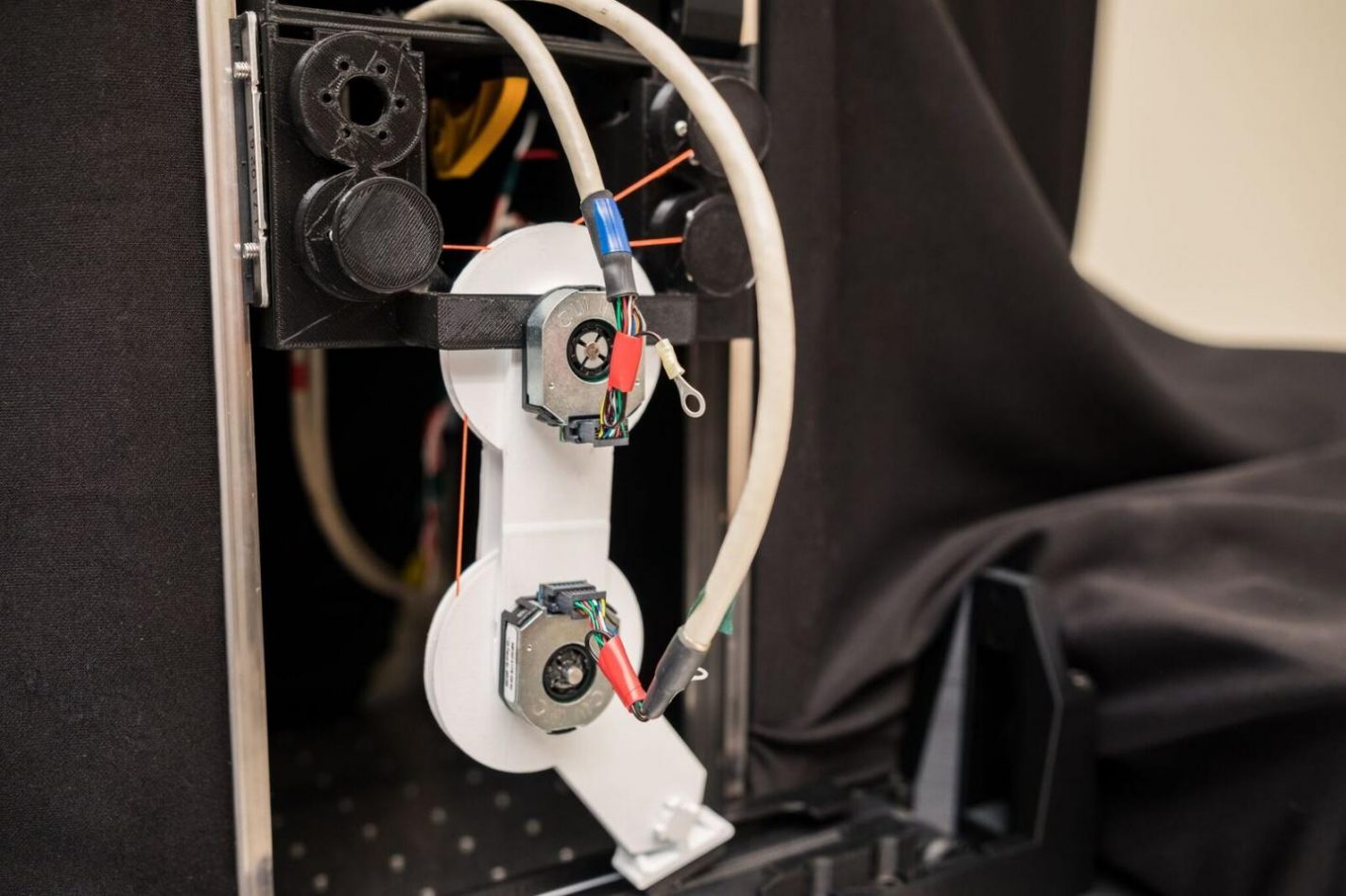

It’s an astonishing evolutionary feat that has long inspired biologists and roboticists–and now a team of researchers at the USC Viterbi School of Engineering believes it has become the first to create an AI-controlled robotic limb driven by animal-like tendons that can even be tripped up and then recover within the time of the next footfall, a task the robot was never explicitly programmed to do.

Francisco J. Valero-Cuevas, a professor of Biomedical Engineering and a professor of Biokinesiology & Physical Therapy at USC, working with USC Viterbi School of Engineering doctoral student Ali Marjaninejad and two other doctoral students, Dario Urbina-Melendez and Brian Cohn, has developed a bio-inspired algorithm that can learn a new walking task by itself after only 5 minutes of unstructured play, and then adapt to other tasks without any additional programming.

Their work, featured as the March cover article of Nature Machine Intelligence, opens exciting possibilities for understanding human movement and disability, creating responsive prosthetics, and building robots that can interact with complex and changing environments, such as those encountered in space exploration and search-and-rescue.

“Nowadays, it takes the equivalent of months or years of training for a robot to be ready to interact with the world, but we want to achieve the quick learning and adaptations seen in nature,” said senior author Valero-Cuevas, who also has appointments in computer science, electrical and computer engineering, mechanical and aerospace engineering and neuroscience at USC.

Marjaninejad, a doctoral candidate in the Department of Biomedical Engineering at USC and the paper’s lead author, said this breakthrough is akin to the natural learning that happens in babies. Marjaninejad explains that the robot was first allowed to understand its environment through a process of free play (known as ‘motor babbling’).

“These random movements of the leg allow the robot to build an internal map of its limb and its interactions with the environment,” said Marjaninejad.

The paper’s authors say that, unlike most current work, their robots learn by doing, without any prior or parallel computer simulations to guide learning.

Marjaninejad added that this is particularly important because programmers can predict and code for multiple scenarios, but not for every possible scenario–thus pre-programmed robots are inevitably prone to failure.

“However, if you let these [new] robots learn from relevant experience, then they will eventually find a solution that, once found, will be put to use and adapted as needed. The solution may not be perfect, but will be adopted if it is good enough for the situation. Not every one of us needs or wants–or is able to spend the time and effort–to win an Olympic medal,” Marjaninejad says.

Through this process of discovering their body and environment, the robot limbs designed at Valero-Cuevas’ lab at USC use their unique experience to develop a gait pattern that works well enough for them, producing robots with personalized movements. “You can recognize someone coming down the hall because they have a particular footfall, right?” Valero-Cuevas asks. “Our robot uses its limited experience to find a solution to a problem that then becomes its personalized habit, or ‘personality’–we get the dainty walker, the lazy walker, the champ… you name it.”

The potential applications for the technology are many, particularly in assistive technology, where robotic limbs and exoskeletons that are intuitive and responsive to a user’s personal needs would be invaluable to those who have lost the use of their limbs. “Exoskeletons or assistive devices will need to naturally interpret your movements to accommodate what you need,” Valero-Cuevas said.

“Because our robots can learn habits, they can learn your habits, and mimic your movement style for the tasks you need in everyday life–even as you learn a new task, or grow stronger or weaker.”

According to the authors, the research will also have strong applications in space exploration and rescue missions, allowing for robots that do what needs to be done without being escorted or supervised as they venture onto a new planet, or into uncertain and dangerous terrain in the wake of natural disasters. These robots would be able to adapt to low or high gravity, or to loose rocks one day and mud after it rains, for example.

The paper’s two additional authors, doctoral students Brian Cohn and Dario Urbina-Melendez, weighed in on the research:

“The ability for a species to learn and adapt their movements as their bodies and environments change has been a powerful driver of evolution from the start,” said Cohn, a doctoral candidate in computer science at the USC Viterbi School of Engineering. “Our work constitutes a step towards empowering robots to learn and adapt from each experience, just as animals do.”

“I envision muscle-driven robots, capable of mastering what an animal takes months to learn, in just a few minutes,” said Urbina-Melendez, a doctoral candidate in biomedical engineering who believes in the capacity for robotics to take bold inspiration from life. “Our work combining engineering, AI, anatomy and neuroscience is a strong indication that this is possible.”

This research was funded by the National Institutes of Health, DoD, and DARPA. For additional information, please visit the companion site, Valero Lab.

Media Contacts:

Amy Blumenthal – USC

Image Source:

Credit to Matthew Lin

Original Research:

“Autonomous functional movements in a tendon-driven limb via limited experience” by Ali Marjaninejad, Darío Urbina-Meléndez, Brian A. Cohn & Francisco J. Valero-Cuevas

Nature Machine Intelligence volume 1, pages 144–154 (2019)

DOI:

10.1038/s42256-019-0029-0

Abstract

Autonomous functional movements in a tendon-driven limb via limited experience

Robots will become ubiquitously useful only when they require just a few attempts to teach themselves to perform different tasks, even with complex bodies and in dynamic environments. Vertebrates use sparse trial and error to learn multiple tasks, despite their intricate tendon-driven anatomies, which are particularly hard to control because they are simultaneously nonlinear, under-determined and over-determined. We demonstrate—in simulation and hardware—how a model-free, open-loop approach allows few-shot autonomous learning to produce effective movements in a three-tendon two-joint limb. We use a short period of motor babbling (to create an initial inverse map) followed by building functional habits by reinforcing high-reward behaviour and refinements of the inverse map in a movement’s neighbourhood. This biologically plausible algorithm, which we call G2P (general to particular), can potentially enable quick, robust and versatile adaptation in robots as well as shed light on the foundations of the enviable functional versatility of organisms.
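
The two phases described in the abstract, motor babbling followed by refinement around an attempted movement, can be illustrated with a small toy example. The sketch below is not the authors' implementation: the `plant` function, the `MOMENT_ARMS` values, the target trajectory and the use of negative tracking error as the reward are all assumptions made for illustration, and a linear least-squares fit stands in for whatever function approximator the paper uses for the inverse map.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy "limb": 3 tendon activations -> 2 joint angles.
# It stands in for the physical hardware; the learner only sees its outputs.
MOMENT_ARMS = np.array([[1.0, -0.6, 0.2],
                        [0.3, 0.8, -1.0]])

def plant(activations):
    """Joint angles produced by a batch of tendon activations (toy model)."""
    return np.tanh(activations @ MOMENT_ARMS.T)

def with_bias(angles):
    """Append a constant column so the fitted map can include an offset."""
    return np.hstack([angles, np.ones((len(angles), 1))])

def fit_inverse_map(angles, activations):
    """Least-squares map from joint angles to tendon activations."""
    weights, *_ = np.linalg.lstsq(with_bias(angles), activations, rcond=None)
    return weights

def apply_inverse_map(weights, angles):
    """Predict activations for desired angles, clipped to the valid range."""
    return np.clip(with_bias(angles) @ weights, 0.0, 1.0)

def tracking_error(weights, target):
    """Mean squared gap between desired and achieved joint angles."""
    achieved = plant(apply_inverse_map(weights, target))
    return float(np.mean((achieved - target) ** 2))

# Phase 1: motor babbling. Try random activations and record what happens.
data_act = rng.uniform(0.0, 1.0, size=(200, 3))
data_ang = plant(data_act)
weights = fit_inverse_map(data_ang, data_act)

# A small cyclic movement in joint space (assumed target, for illustration).
t = np.linspace(0.0, 2.0 * np.pi, 50)
target = np.column_stack([0.3 + 0.1 * np.sin(t), 0.1 + 0.1 * np.cos(t)])

# Phase 2: attempt the movement open-loop, add the observed data from that
# attempt to the training set, refit, and keep the best-scoring map.
errors = [tracking_error(weights, target)]
best_weights, best_error = weights, errors[0]
for attempt in range(5):
    act = apply_inverse_map(weights, target)
    ang = plant(act)
    data_act = np.vstack([data_act, act])
    data_ang = np.vstack([data_ang, ang])
    weights = fit_inverse_map(data_ang, data_act)
    errors.append(tracking_error(weights, target))
    if errors[-1] < best_error:  # reward = negative tracking error
        best_weights, best_error = weights, errors[-1]

print("tracking error per attempt:", errors)
```

Each attempt contributes samples near the desired movement, so later fits weight that neighbourhood more heavily than the initial babbling data alone. In this toy setting the error is not guaranteed to shrink at every attempt, which is why the sketch keeps the best map seen so far.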